SVM (Support Vector Machines)

The gist of SVM

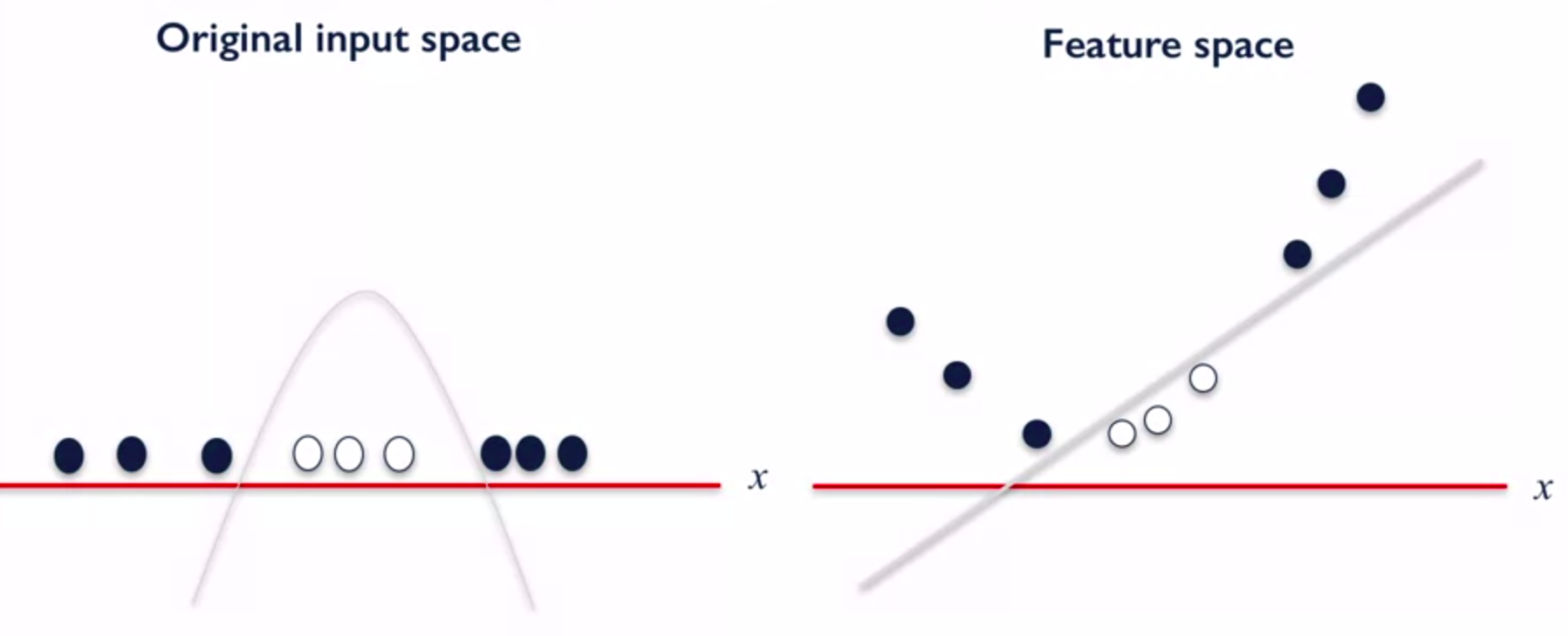

This kind of transformation resembles the polynomial transformation used earlier for linear regression, and in fact a polynomial kernel function for SVM exists. There are different kernel functions, but what they have in common is that they map the original input space to a feature space in which the data can become linearly separable.
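To make the idea concrete, here is a minimal sketch in plain Python. The toy data and the feature map `phi` are illustrative assumptions, not part of the course material. One-dimensional points that no single threshold can separate become separable by a straight line once each point x is mapped to (x, x^2), which is what a degree-2 polynomial transformation does.

```python
# Toy 1-D data set (an illustrative assumption): class 1 sits on the outside,
# class 0 in the middle, so no single threshold on x separates them.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
labels = [1, 1, 0, 0, 0, 1, 1]


def phi(x):
    """Hypothetical explicit feature map: x -> (x, x^2)."""
    return (x, x * x)


def separable_by_threshold(values, labels):
    """Return True if some threshold puts each class entirely on one side."""
    zeros = [v for v, y in zip(values, labels) if y == 0]
    ones = [v for v, y in zip(values, labels) if y == 1]
    return max(zeros) < min(ones) or max(ones) < min(zeros)


# In the original input space no threshold works.
print(separable_by_threshold(xs, labels))  # False

# In the feature space the second coordinate (x^2) separates the classes:
# the horizontal line x2 = 2.5 is a linear decision boundary.
squared = [phi(x)[1] for x in xs]
print(separable_by_threshold(squared, labels))  # True
```

A kernel function gives the same effect without building the mapped vectors explicitly: it computes inner products as if the points already lived in the feature space.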

Note

We won’t dig deep into the math of SVM, but we strongly encourage you to take a look at this course by Andrew Ng.

Handling Overfitting

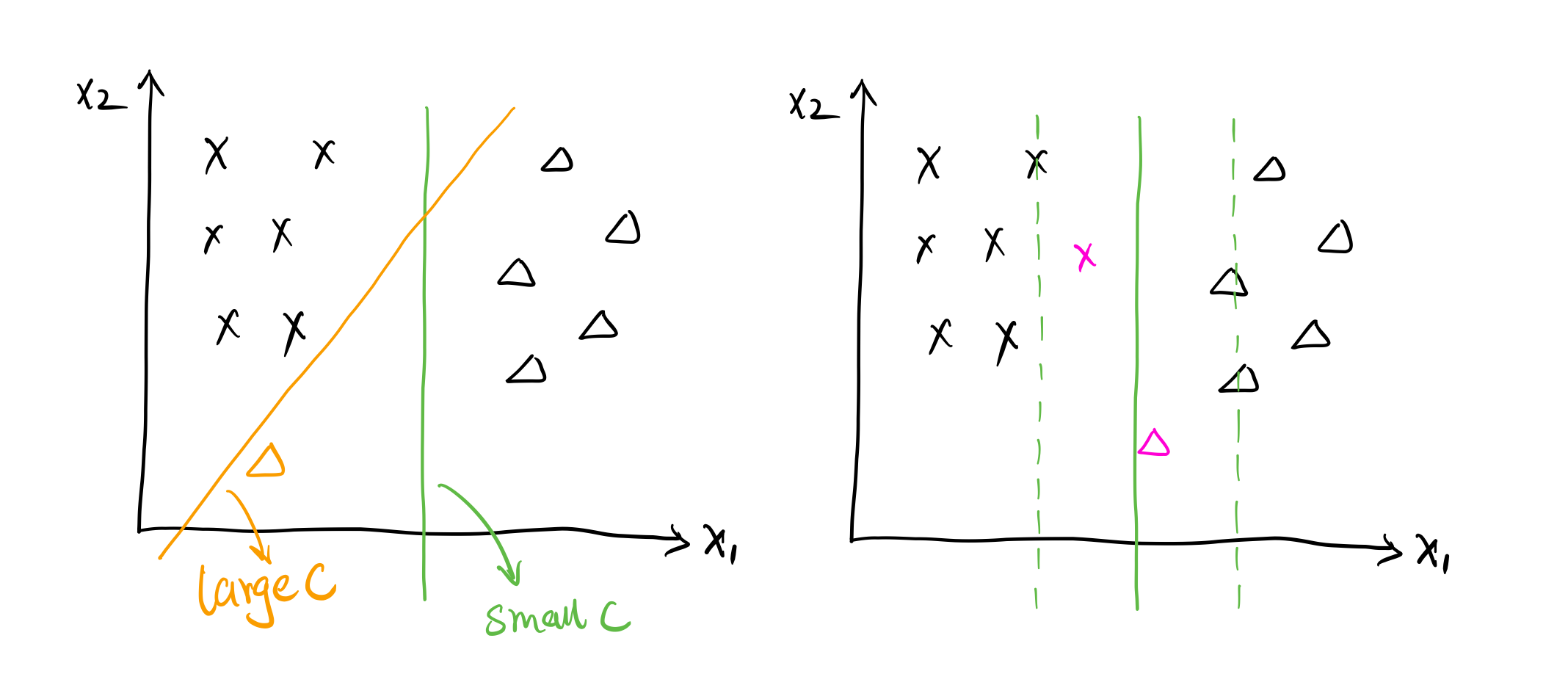

The strength of regularization in SVM is determined by the C parameter: larger values of C mean less regularization, smaller values mean more. There is also a parameter named gamma, which is used in the kernel function and controls the smoothness of the decision boundary. A smaller gamma groups more points together and gives a smoother decision boundary, while a larger gamma gives a more complex one. Both parameters affect how strongly the model is regularized and should be chosen carefully.
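The effect of gamma can be illustrated with the RBF (Gaussian) kernel, K(x, x') = exp(-gamma * ||x - x'||^2). The text above does not name a specific kernel, so treating gamma as the RBF coefficient is an assumption here (it matches the meaning of `gamma` in scikit-learn's `SVC` and `SVR`). The sketch below, with made-up points, shows how the similarity between two points falls off with distance for a small and a large gamma.

```python
import math


def rbf_kernel(x, y, gamma):
    """RBF kernel between two points given as equal-length sequences."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


# Illustrative points at distances 0.5, 1 and 2 from the origin.
origin = (0.0, 0.0)
points = [(0.5, 0.0), (1.0, 0.0), (2.0, 0.0)]

for gamma in (0.1, 10.0):
    similarities = [rbf_kernel(origin, p, gamma) for p in points]
    print(gamma, [round(s, 4) for s in similarities])
```

With the small gamma all three points stay fairly similar to the origin, so many points influence each other and the boundary is smooth. With the large gamma the similarity is already close to zero at distance 1, so each training point only affects its immediate neighbourhood and the boundary can become much more complex.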

Description of assignment

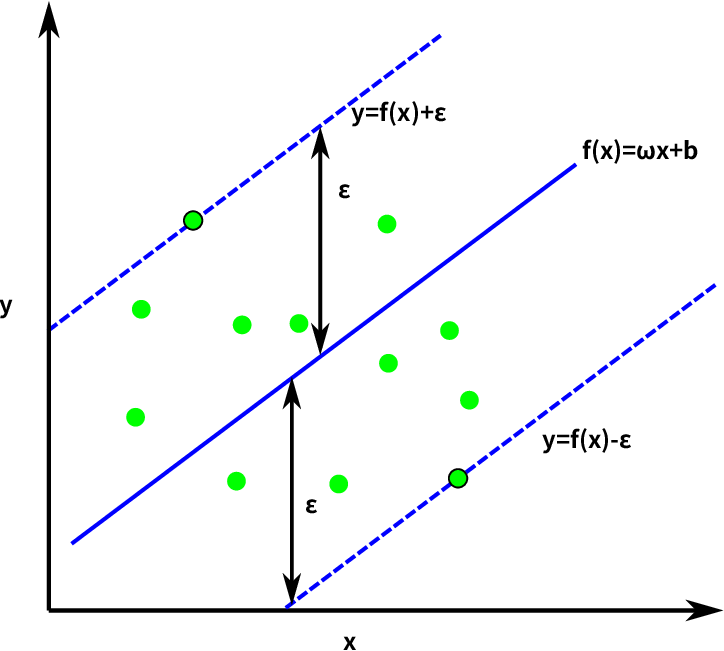

In today’s assignment you will work with an SVM regressor. You will have a chance to try different kernel functions and different values of C and gamma, and to compare the results with the previous ones.
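As a starting point, the comparison could look roughly like the sketch below. It assumes scikit-learn's `SVR` is the regressor in question and uses a synthetic sine data set in place of the assignment data. The kernel list and the C values are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.metrics import mean_squared_error
from sklearn.svm import SVR

# Synthetic 1-D regression data (an assumption; use the assignment data instead).
X = np.linspace(0.0, 2.0 * np.pi, 40).reshape(-1, 1)
y = np.sin(X).ravel()

results = {}
for kernel in ("linear", "poly", "rbf"):
    for C in (0.01, 10.0):
        # gamma is ignored by the linear kernel; "scale" is scikit-learn's default.
        model = SVR(kernel=kernel, C=C, gamma="scale")
        model.fit(X, y)
        results[(kernel, C)] = mean_squared_error(y, model.predict(X))

for (kernel, C), mse in sorted(results.items()):
    print(f"kernel={kernel:<6} C={C:<6} train MSE={mse:.4f}")
```

The error here is measured on the training data only, which is enough to see the effect of the parameters but not to pick the best model. For the actual comparison, evaluate on held-out data in the same way as for the previous models.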